Multiple r squared xlstat

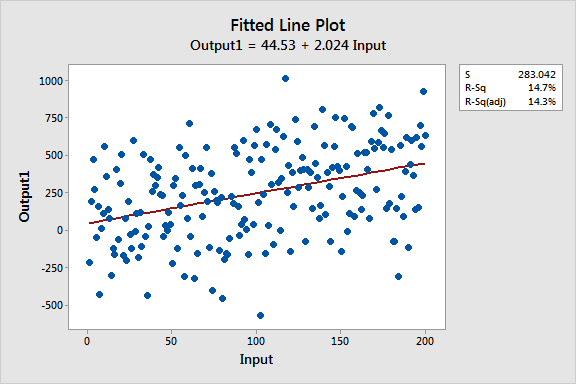

You need to understand that R-square is a measure of explanatory power, not fit. You can generate lots of data with low R-square, because we don't expect models (especially in the social or behavioral sciences) to include all the relevant predictors needed to explain an outcome variable. You can cite works by Neter, Wasserman, or many other authors about R-square. You should note that R-square, even when small, can be significantly different from 0, indicating that your regression model has statistically significant explanatory power. However, you should always report the value of R-square as an effect size, because people might question the practical significance of the value. As I said, in some fields R-square is typically higher, because it is easier to specify complete, well-specified models. But in the social sciences, where it is hard to specify such models, low R-square values are often expected. You can read about the difference between statistical significance and effect sizes if you want to know more.

Hi Abu, although I suppose I would be hard-pressed not to call 70% high, I don't think we should be caught in the trap of referring to r-square values as high or low without knowing much more. In pretty much any field I work in, I would never expect to see an r-square of 70%. It really depends on what you are trying to predict, what your predictors are, and how reasonable it is that you can predict that. It's important to realize that the outcome could be made up of a lot of things. For example, if it is a psychological scale, part of it is always going to be measurement error. But if it is some kind of human behavior, thought, or feeling, then unless your predictors are very closely related to the outcome (in other words, nearly the same thing), it is extremely unlikely that you are going to be able to predict even half of it.

Even something seemingly well related, such as suicidal ideation, doesn't predict actual suicide attempts that well. Having a stressful job doesn't predict individual stress that well, because individual responses to stress vary a great deal. I am not giving you any kind of a standard, but I wouldn't find an r-square of. And a small r-square could have important implications. Even if a combination of predictors representing modifiable behaviors predicted just a small amount of the variance in an important health outcome, that could be very important.

R2 is one of the most abused metrics for judging goodness of fit in multiple regression analysis. It can be grossly misleading in some situations. For example, you can get a very large R2 (e.g. 0.999) as the number of predictors in your model approaches the sample size (n). You can also increase R2 by including a predictor even if it has nothing to do with your response variable. A small R2 also does not mean poor explanatory power. It also depends on sample size: with the same number of predictors, R2 values gradually decrease as you increase the sample size. In addition, just one influential value in your data can distort R2. Please first check influence statistics (e.g. outliers, leverage points) and collinearity (e.g. variance inflation).

Anyway, judging an analysis based on R2 is a serious error; we know that R2 is a very bad measure of goodness of fit. Instead you could check measures of "lack of fit" (e.g. Akaike's or Bayesian information criteria) to select the best subset model for your data. The problem with these statistics is that they assume the model is correctly specified. They wouldn't tell you whether you have autocorrelation, influential observations, or any other problem that distorts the analysis. If you are looking for predictive power, it is even better to first apply partial least squares regression or reduced rank regression and extract the most important factors.

Lukacs, P.M., Burnham, K.P., Anderson, D.R. Model selection bias and Freedman's paradox.

I suggest starting with a simple x-y plot of your data. If the points look like a shapeless scatter rather than a trend, your model isn't going to be a very good predictor. If you still want to use the regression model, you absolutely must include the R2 values.
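The claim in this thread that R2 climbs toward 1 as the number of predictors approaches the sample size, even when the predictors have nothing to do with the response, can be checked with a short simulation. The following is a minimal sketch, assuming NumPy is available. The sample size, the seed, and the helper name `r_squared` are illustrative choices and do not come from the thread; adjusted R2 is included only as a familiar point of comparison.

```python
import numpy as np

def r_squared(X, y):
    """Return (R2, adjusted R2) for an OLS fit of y on X plus an intercept."""
    n, k = X.shape
    design = np.column_stack([np.ones(n), X])          # prepend intercept column
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)  # least-squares coefficients
    residuals = y - design @ beta
    ss_res = float(residuals @ residuals)              # residual sum of squares
    ss_tot = float(((y - y.mean()) ** 2).sum())        # total sum of squares
    r2 = 1.0 - ss_res / ss_tot
    adj_r2 = 1.0 - (1.0 - r2) * (n - 1) / (n - k - 1)  # penalize extra predictors
    return r2, adj_r2

rng = np.random.default_rng(0)    # fixed seed so the run is repeatable (assumption)
n = 30                            # illustrative sample size
y = rng.normal(size=n)            # response is pure noise
noise = rng.normal(size=(n, 28))  # predictors generated independently of y

results = {}
for k in (1, 5, 15, 28):
    # Nested models: each fit uses the first k noise columns.
    results[k] = r_squared(noise[:, :k], y)
    print(f"k={k:2d}  R2={results[k][0]:.3f}  adjusted R2={results[k][1]:.3f}")
```

Because the models are nested, R2 cannot decrease as columns are added, although every predictor here is unrelated to the response. Adjusted R2 penalizes the extra predictors, which is one reason a raw R2 should not be read on its own.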